In part 1 and part 2 of this blog series, we presented the topic of data security in data platforms, and analyzed a high-level architecture as well as the suitability of Exasol. This last part deals in detail with the Protegrity platform and its interaction with Exasol.

The Protegrity platform is designed to provide extensive, end-to-end data protection across various applications and data stores:

Source: Protegrity 09/2021

Via a central policy server, the Enterprise Security Administrator (ESA), various protection mechanisms (tokenization, encryption, data masking and anonymization) are linked to user groups, data elements and data stores, which allows a large number of data protectors to be configured.

Users and their groups, policies, etc. are thus defined centrally for a variety of data technologies, such as databases, file systems, APIs and others. Log data on data access are also collected and processed centrally here.

In addition to the required licenses (see also here), using Exasol together with Protegrity requires the steps briefly outlined below:

1. Installation of Exasol and Protegrity

Both solutions are delivered as software appliances, so installation is usually limited to setting user passwords and configuring the operating system. Networking plays a central role here: it must ensure, first, that Exasol and the ESA are reachable and, second, that the database and the Protegrity solution can communicate with each other. For first steps, including an initial test, both solutions can also easily be installed on small virtual machines (on a laptop, for example). For heavy production workloads, however, thorough planning of backups and high-availability scenarios is recommended. The sizing of the Exasol cluster and the ESA instance depends on data volume, number of users and auditing requirements.

2. Installation of the Protegrity plug-in on the Exasol cluster

To use the Protegrity solution within Exasol, a plug-in must be installed that ensures secure, encrypted communication between Exasol and Protegrity. Through this plug-in, configurations are retrieved from the ESA and log data are sent to it. Note that an active connection between Exasol and Protegrity is not needed for encryption, masking etc., but it is needed for sending log data. Installing the plug-in simply means uploading the corresponding archive via the Exasol administration console.

3. Configuration of Protegrity via the ESA web front-end

a) Exasol data store: Exasol is registered here as a data store (via the IP addresses of its nodes).

b) Roles and user sources: Next, the source of the users and roles relevant to encryption and decryption is configured. This can be a file, a database (various databases are supported as sources), Active Directory or LDAP. These are the users who will later work with the database. However, it is also possible to define a default behaviour for unknown users, so the number of database users can be significantly higher: only the users actually allowed to encrypt and/or decrypt are maintained in the ESA, while unknown users receive the default behaviour. Roles serve to implement and administer data-security behaviour at group level. In the following example, the users "JOHN" and "SYS" are assigned to the BusinessAnalyst role, while the Fallback role currently has no members. Data stores and policies are also assigned to the roles at this point. Specifically:

| Role | Members | Data stores | Policies |
|---|---|---|---|
| BusinessAnalyst | JOHN, SYS | Exasol | DataSecurity |
| Fallback | - | Exasol | DataSecurity |
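The role assignment and the default behaviour for unknown users can be pictured as a simple lookup. The following is a minimal Python sketch, assuming that every user who is not maintained in the ESA is mapped to the Fallback role; `Role`, `ROLES`, `DEFAULT_ROLE` and `resolve_role` are hypothetical names and do not reflect Protegrity's internal data model or any Protegrity API.

```python
from dataclasses import dataclass


# Hypothetical model of the role table above; not a Protegrity API.
@dataclass(frozen=True)
class Role:
    name: str
    members: frozenset[str]
    data_stores: frozenset[str]
    policies: frozenset[str]


ROLES = [
    Role("BusinessAnalyst", frozenset({"JOHN", "SYS"}),
         frozenset({"Exasol"}), frozenset({"DataSecurity"})),
    Role("Fallback", frozenset(),
         frozenset({"Exasol"}), frozenset({"DataSecurity"})),
]

# Assumption: users not maintained in the ESA receive the Fallback behaviour.
DEFAULT_ROLE = "Fallback"


def resolve_role(user: str, data_store: str) -> str:
    """Return the role governing `user` on `data_store`.

    Only explicitly maintained users get an explicit role;
    every other database user falls back to DEFAULT_ROLE.
    """
    for role in ROLES:
        if user in role.members and data_store in role.data_stores:
            return role.name
    return DEFAULT_ROLE


print(resolve_role("JOHN", "Exasol"))  # BusinessAnalyst
print(resolve_role("HANS", "Exasol"))  # Fallback
```

The point of the fallback is that the ESA only has to know the comparatively few users who may encrypt or decrypt, not every database user.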

c) Data elements and masks: This step defines how each data element is to be handled from a security perspective:

Is it a structured data store (database, Hive, API etc.) or an unstructured one (file system etc.)?

Which obfuscation algorithm should be used (tokenization, 3DES, AES-256 etc.)?

Which parameters are passed to Protegrity for encryption/obfuscation? Which positions should be preserved, and which should be obfuscated? For postal codes, for example, preserving the first 2-3 digits would be a sensible scenario, so that all data users can be given GDPR-compliant information.
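Preserving the leading digits of a postal code amounts to a simple partial mask. As a minimal Python sketch (the function name `mask_postal_code`, the default of two preserved digits and the `*` mask character are assumptions for illustration; in practice such a rule would be configured on the data element in the ESA, not in application code):

```python
def mask_postal_code(code: str, keep: int = 2, mask_char: str = "*") -> str:
    """Keep the first `keep` characters of a postal code and mask the rest.

    Illustrative only; this is not how Protegrity applies masks internally.
    """
    if keep < 0:
        raise ValueError("keep must be non-negative")
    # Codes shorter than `keep` are returned unchanged.
    return code[:keep] + mask_char * max(len(code) - keep, 0)


print(mask_postal_code("80331"))          # 80***
print(mask_postal_code("80331", keep=3))  # 803**
```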

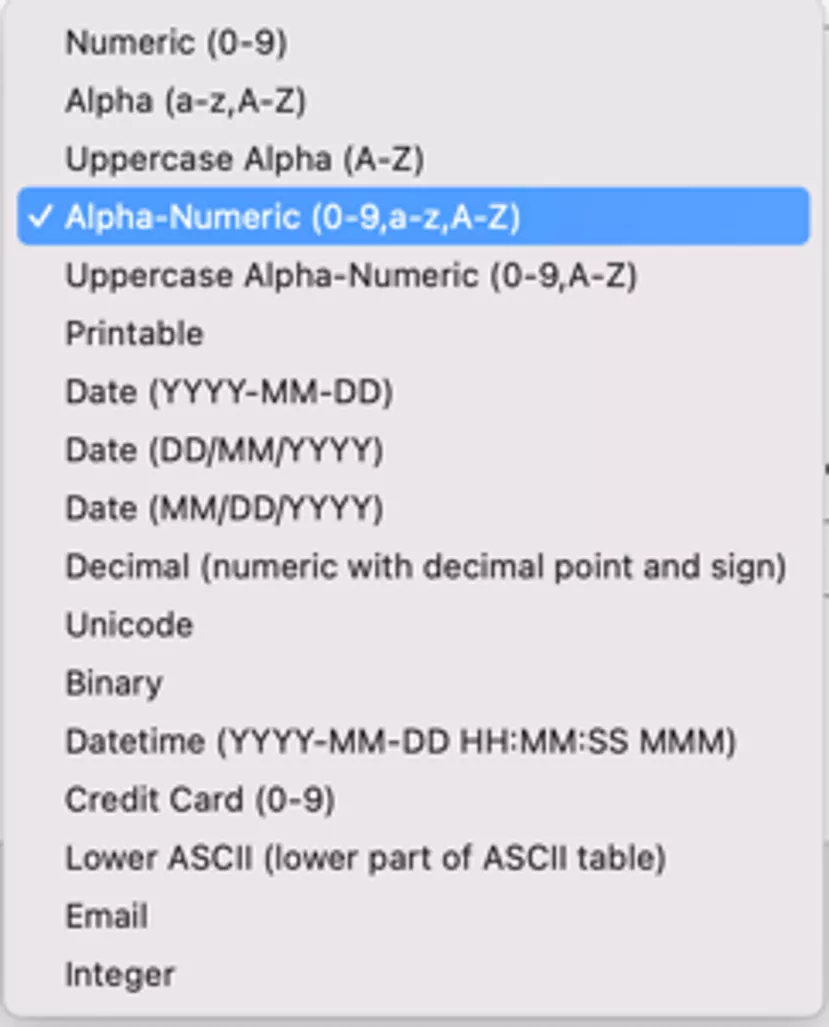

Protegrity offers numerous settings here. In what follows, however, we use only a single data element, "AlphaNumeric", defined as follows:

Accordingly, alphanumeric values are to be obfuscated with a tokenizer. Here we want to obfuscate the entire attribute as well as its length. This also reveals a remarkable strength of the Protegrity software, which supports a whole range of data types:

This makes the Protegrity software comparatively "simple" to introduce: data types are preserved despite obfuscation, so applications do not need any special handling of obfuscated data elements.
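To make the idea of type-preserving tokenization concrete, here is a deliberately simple Python sketch. It uses an in-memory token vault (random alphanumeric tokens plus a lookup table for reversal), which is not necessarily how Protegrity tokenizes; `ToyTokenVault` is a hypothetical name. For simplicity the sketch keeps the token length equal to the input length; that is a choice of the sketch, not of the AlphaNumeric element described above.

```python
import secrets
import string

ALPHABET = string.ascii_letters + string.digits


class ToyTokenVault:
    """Toy, in-memory, vault-based tokenizer for alphanumeric values.

    Tokens are drawn from the same alphabet as the input, so an
    alphanumeric column stays alphanumeric after protection.
    """

    def __init__(self) -> None:
        self._to_token: dict[str, str] = {}
        self._to_clear: dict[str, str] = {}

    def protect(self, value: str) -> str:
        if not value or any(c not in ALPHABET for c in value):
            raise ValueError("expected a non-empty ASCII alphanumeric value")
        if value in self._to_token:
            # Deterministic: the same clear value always yields the same
            # token, so equality comparisons on protected values still work.
            return self._to_token[value]
        while True:
            # Same length as the input, drawn from the same alphabet.
            token = "".join(secrets.choice(ALPHABET) for _ in value)
            if token != value and token not in self._to_clear:
                break
        self._to_token[value] = token
        self._to_clear[token] = value
        return token

    def unprotect(self, token: str) -> str:
        # Raises KeyError for tokens this vault never issued.
        return self._to_clear[token]


vault = ToyTokenVault()
token = vault.protect("Muenchen2021")
print(token)                   # random 12-character alphanumeric string
print(vault.unprotect(token))  # Muenchen2021
```

Because the token has the same type as the clear value, it fits into the same column definition, which is exactly why applications need no special handling.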

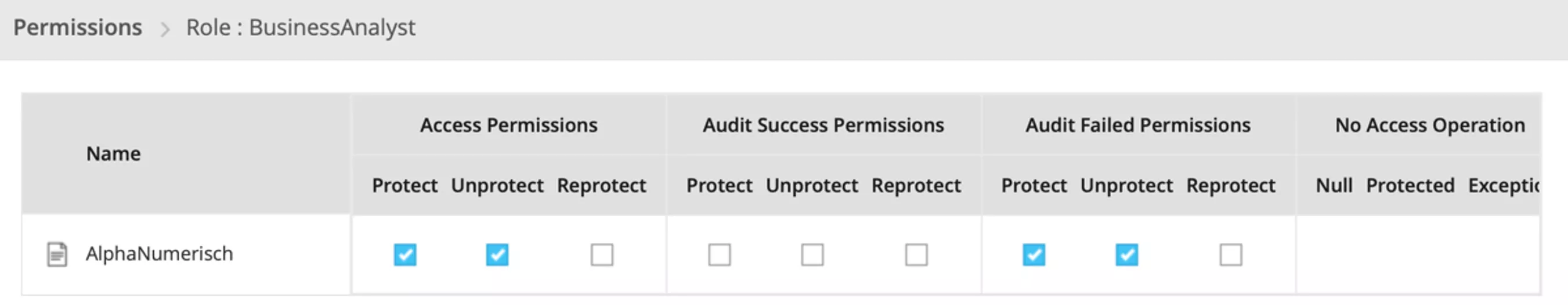

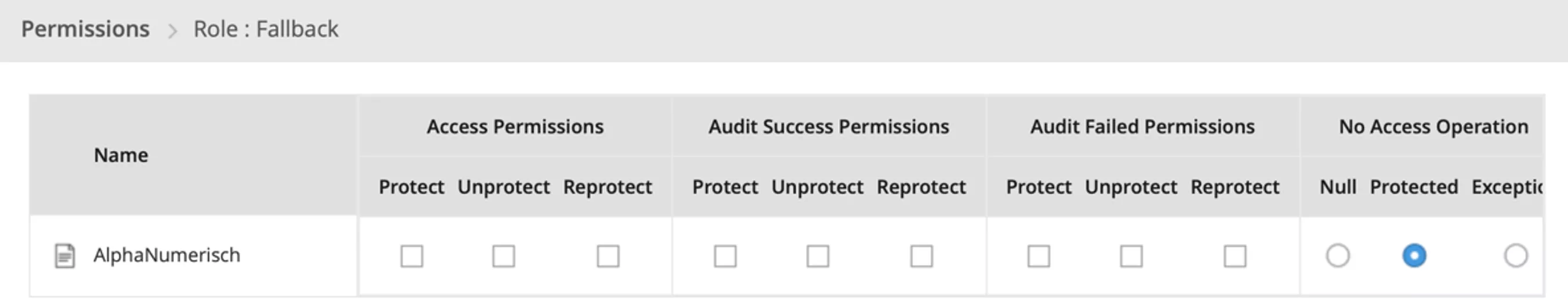

d) Policies and permissions: In a final step, policies and permissions are defined. They determine how data elements, roles and data stores interact and which permissions each role has on which data elements. At this stage, the policy is also deployed, i.e. delivered to Exasol or another data store. In effect, this step determines how the data security mechanism behaves in specific constellations:

The BusinessAnalyst role may invoke Protect and Unprotect on the data elements. Failed Protect/Unprotect calls are logged.

The Fallback role is configured so that data elements are only ever returned in protected form.
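These two rules can be expressed as a small permission check. The following Python sketch is illustrative only: it assumes that a denied Unprotect returns the protected value unchanged and a denied Protect raises an error (the behaviour observed later in this article for user HANS), and that denied calls are logged. `PERMISSIONS`, `evaluate` and `PolicyError` are hypothetical, and the string reversal is a placeholder for real tokenization.

```python
import logging
from collections.abc import Callable
from enum import Enum

logging.basicConfig(level=logging.INFO)
# Stand-in for the audit log that is sent to the ESA.
audit = logging.getLogger("audit")


class Op(Enum):
    PROTECT = "protect"
    UNPROTECT = "unprotect"


class PolicyError(PermissionError):
    """Raised when a role may not perform an operation."""


# Hypothetical permission matrix for the DataSecurity policy.
PERMISSIONS: dict[tuple[str, str], set[Op]] = {
    ("BusinessAnalyst", "AlphaNumeric"): {Op.PROTECT, Op.UNPROTECT},
    ("Fallback", "AlphaNumeric"): set(),
}


def evaluate(role: str, element: str, op: Op, value: str,
             protect: Callable[[str], str],
             unprotect: Callable[[str], str]) -> str:
    """Apply `op` to `value` if the policy allows it for `role`."""
    allowed = PERMISSIONS.get((role, element), set())
    if op in allowed:
        return protect(value) if op is Op.PROTECT else unprotect(value)
    # Every denied call is written to the audit log.
    audit.warning("denied %s on %s for role %s", op.value, element, role)
    if op is Op.UNPROTECT:
        # No unprotect right: hand back the protected value unchanged.
        return value
    raise PolicyError(f"role {role} may not {op.value} {element}")


def placeholder(value: str) -> str:
    # Placeholder transformation (string reversal), NOT real encryption.
    return value[::-1]


print(evaluate("BusinessAnalyst", "AlphaNumeric", Op.UNPROTECT,
               "NHOJ", placeholder, placeholder))  # JOHN
print(evaluate("Fallback", "AlphaNumeric", Op.UNPROTECT,
               "NHOJ", placeholder, placeholder))  # NHOJ
```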

4. Linking Exasol to ESA

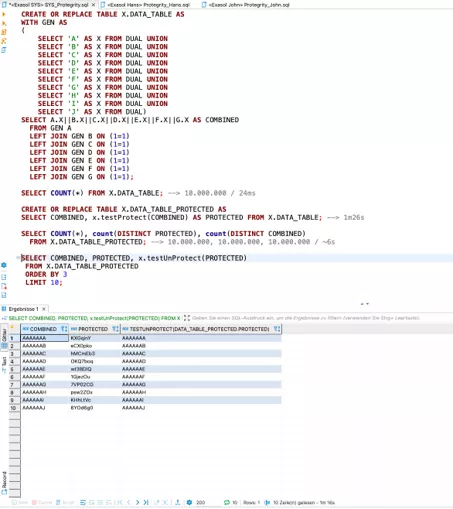

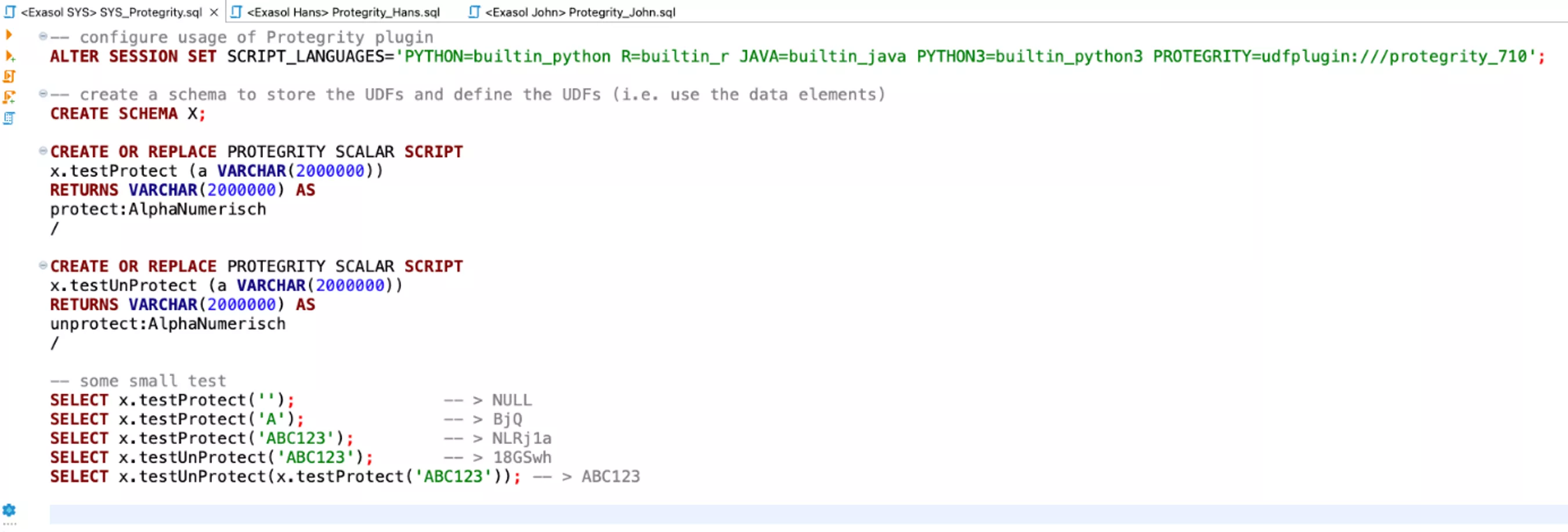

Once the policies have been configured in the ESA, the corresponding UDFs still have to be created in Exasol for each data element and made available to the database users. The following script illustrates such an implementation for the "AlphaNumeric" data element:

As can be seen, Protegrity is used purely via scripts and UDF calls in SQL. For the end user JOHN, usage is just as simple:

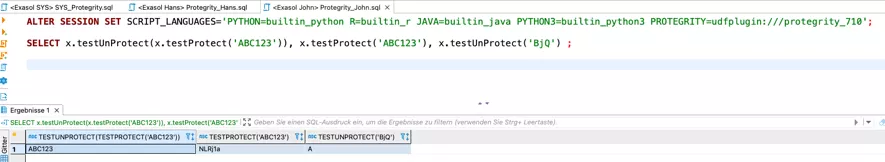

It gets interesting when a user HANS, who has the appropriate execute rights but is not a member of the BusinessAnalyst role, tries to encrypt or decrypt data:

When encrypting, HANS receives an error message; when decrypting, the encrypted value is simply returned unchanged.

Finally, Protegrity offers a variety of possible "behaviours" which must be discussed and ultimately defined, analogously to roles and rights within a database. From our point of view, the most important feature here is that Protegrity makes it very easy to implement such data protection at a fine granularity. In other words, not only can individual database attributes be treated separately (via data element definitions for e-mail, telephone number, name, date of birth, credit card number etc.), but so can different user groups.

Of course, encryption and decryption do not come for free; they can add overhead to certain ETL workloads. The following screenshot of a sample performance test is for information only and should not be taken as representative:

Please note:

a) The test was carried out on one of our b.telligent laptops, on which the Exasol VM, the ESA VM, DBeaver, Word, Outlook and Teams were running alongside VirtualBox. In actual production operation on a real Exasol cluster (e.g. 5 nodes with 4 cores), a speed-up by a factor of 20 is therefore quite possible, i.e. we can expect less than 10 seconds of overhead instead of 1m16s for encrypting one attribute across 10,000,000 records.
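The estimate in a) can be checked with a short calculation, assuming the factor of 20 applies to the entire measured runtime (the factor itself is an estimate for a real cluster, not a measured value):

$$
t_{\text{laptop}} = 1\,\text{min}\,16\,\text{s} = 76\,\text{s}, \qquad
\frac{10^{7}\ \text{records}}{76\,\text{s}} \approx 1.3 \times 10^{5}\ \text{records/s}
$$

$$
t_{\text{cluster}} \approx \frac{t_{\text{laptop}}}{20} = \frac{76\,\text{s}}{20} = 3.8\,\text{s} < 10\,\text{s}
$$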

b) Data/attributes are encrypted only when they are stored. Since not all records are continuously rewritten, the performance measured for 10 million records can be regarded as very good.

c) Decryption, too, usually takes place not continuously but only for selected analyses and ETL processes, so the overhead here is also largely negligible.

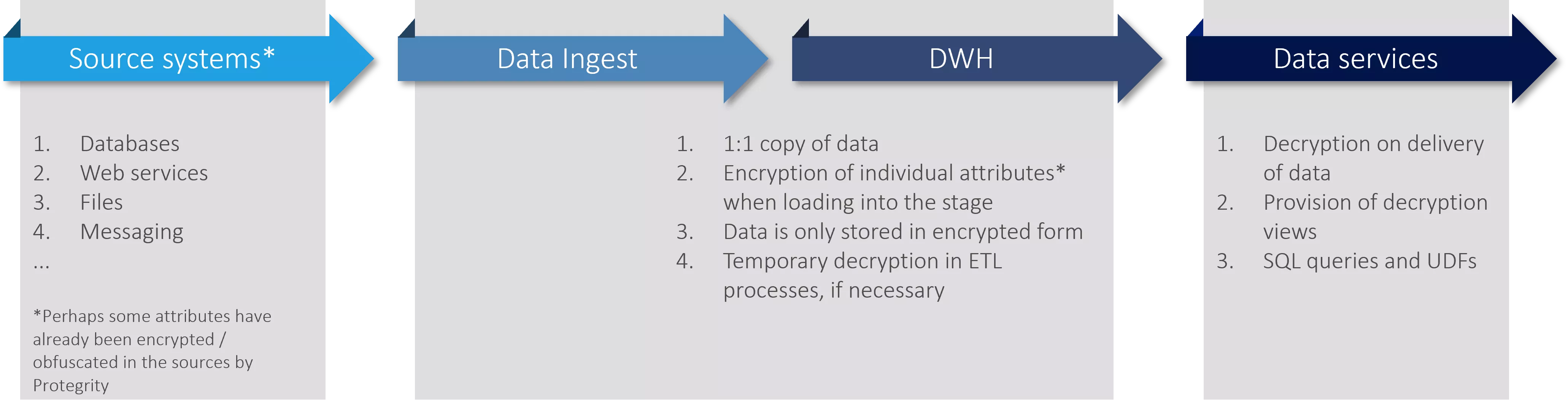

Logical data flow / use of Protegrity

Finally, a few thoughts on using Protegrity in ETL processes and introducing it into existing DWH solutions. Using Protegrity in ETL and reporting is very simple:

a) Encrypt all attributes at the time of loading.

b) Decrypt individual attributes only when needed (access or ETL logic requiring data in raw form).

c) Store only encrypted/obfuscated attributes, so that Protegrity retains full control over these data.
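The load pattern in a) to c) can be sketched as a generator that protects the relevant columns before rows reach the DWH. This is a minimal Python illustration: `load_protected` is a hypothetical helper, which columns count as sensitive is an assumption passed in by the caller, and the `protect` argument stands in for the per-data-element protect UDF in Exasol.

```python
from collections.abc import Callable, Iterable, Iterator

Row = dict[str, str]


def load_protected(rows: Iterable[Row], sensitive: set[str],
                   protect: Callable[[str], str]) -> Iterator[Row]:
    """Yield each row with its sensitive columns protected.

    Only protected values are passed on for storage; clear values of
    sensitive columns never reach the target tables.
    """
    for row in rows:
        yield {col: protect(val) if col in sensitive else val
               for col, val in row.items()}


def placeholder_protect(value: str) -> str:
    # Stand-in for a real protect call (string reversal, NOT encryption).
    return value[::-1]


source = [{"id": "1", "name": "JOHN", "city": "Munich"}]
stored = list(load_protected(source, {"name"}, placeholder_protect))
print(stored)  # [{'id': '1', 'name': 'NHOJ', 'city': 'Munich'}]
```

Decryption then happens only at read time and only for the roles allowed to unprotect, which matches point b).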

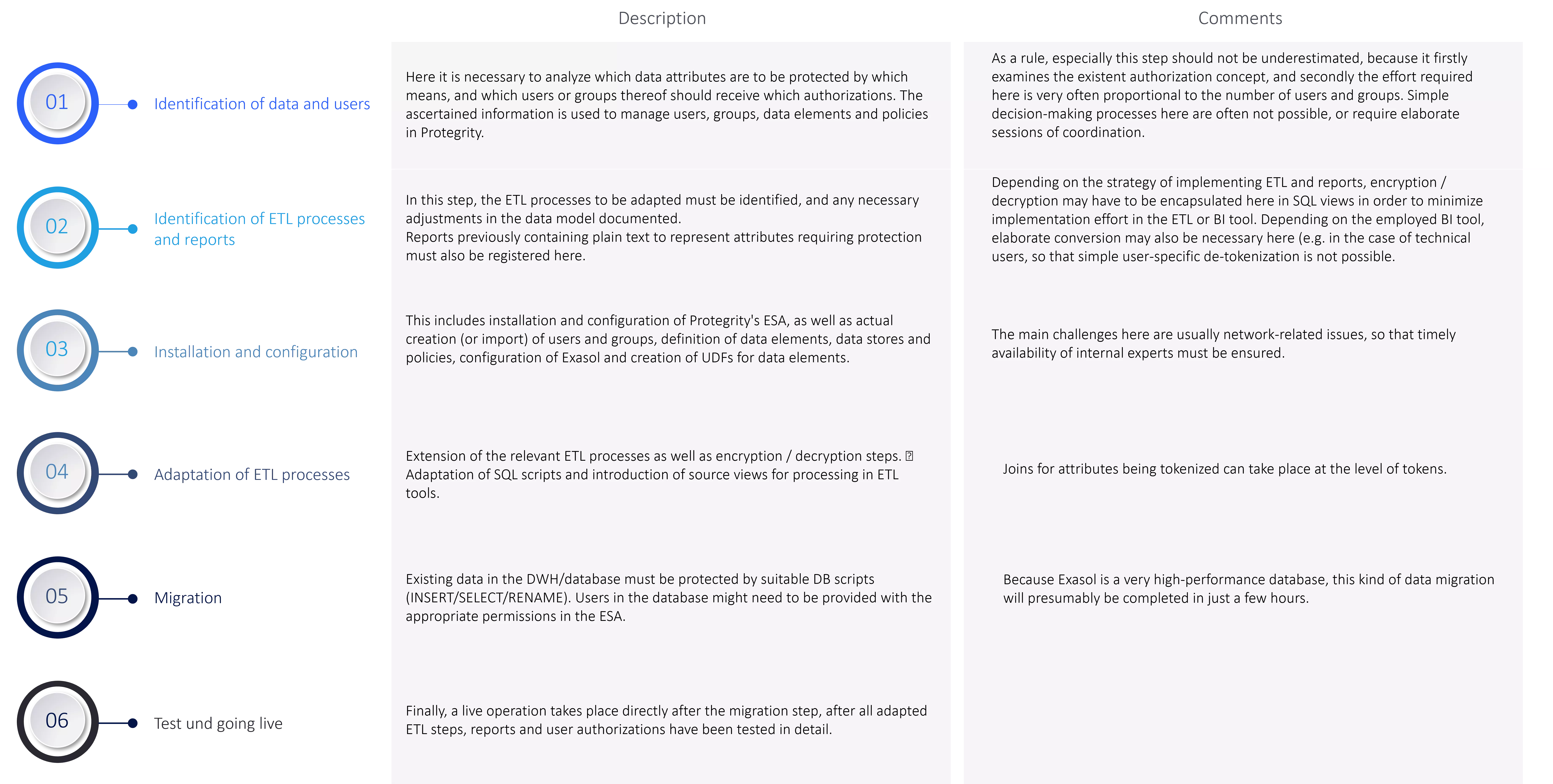

The following steps are suitable as project stages for implementing granular data protection within an existing DWH.

This is a draft implementation plan. Real plans may need to include additional, parallel implementation streams or a broader rollout of the Protegrity solution.

Summary

In this blog series, we described Protegrity and its interaction with the Exasol database. It was important to us to outline a data protection solution in a DWH context and to provide insights into such a solution. Crucially, the interplay between the Protegrity solution and the Exasol database must guarantee performance, availability and security, for example by allowing the security of sensitive data to be controlled centrally.

Of course, we look forward to more detailed architectural and technical discussions; in particular, do not hesitate to contact us before developing your own solution in this area.