In this blog post, we'll be taking a look at Azure Machine Learning. Thanks to a graphical user interface that is both intuitive to operate and flexible, this tool is suitable for data science beginners as well as experts.

Source: Microsoft (https://studio.azureml.net/)

With Azure Cloud, Microsoft now offers a range of services that were previously hosted on-premises – that is, on the user's own servers. One of them is a capable machine learning tool that runs in the cloud and does not require installation.

To work with the Microsoft Azure Machine Learning Studio, you just need to register on the site. You can then start working with the tool right away.

Smaller "experiments" are free, with no charge for CPU time; only larger projects attract a charge. The exact pricing is available from Microsoft here: https://azure.microsoft.com/en-gb/pricing/details/machine-learning/.

By the way, the screenshots shown here are always in English. The benefit for users is that, whenever they have a problem with an individual function, they can google the right term and get more hits.

Our first experiment

To demonstrate the features and user interface of AzureML Studio, I decided to use the Titanic dataset. This is the classic dataset for testing machine learning algorithms; it contains the following information about the passengers on the Titanic: name, age, booking class, gender and survival status.

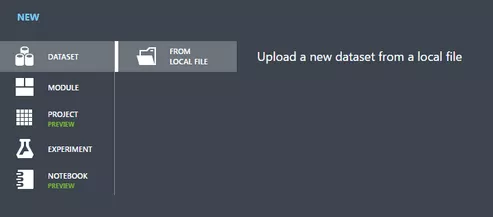

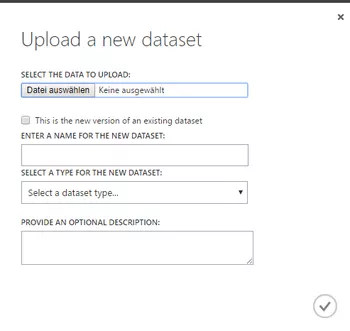

To upload a new dataset (as a CSV file, for example), go to the Studio's dataset area, then click "New" in the bottom left.

Then select "from local file" and enter the details of the file you wish to upload.

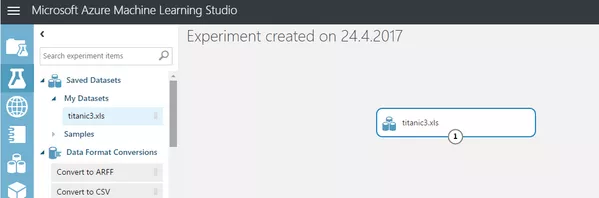

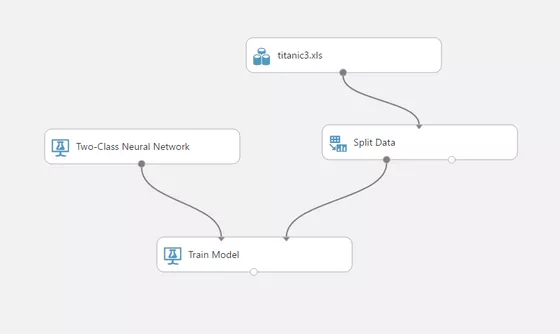
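For readers who want to inspect such a file before uploading it, here is a minimal Python sketch of reading CSV data into rows. It is not part of the Studio workflow. The sample values are made up, and all column names apart from "Survived" are assumptions about how the file might be laid out.

```python
import csv
import io

# Hypothetical sample in the shape of the Titanic data; the values are made up
# and every column name except "Survived" is an assumption.
SAMPLE_CSV = """Name,Age,Pclass,Sex,Survived
Passenger A,22,3,male,0
Passenger B,38,1,female,1
Passenger C,,3,female,1
"""


def load_rows(csv_file):
    """Read a CSV file object into a list of dicts keyed by column name."""
    return list(csv.DictReader(csv_file))


rows = load_rows(io.StringIO(SAMPLE_CSV))
print(len(rows), "rows; columns:", list(rows[0].keys()))
```

With a real file, `open("titanic.csv", newline="")` (file name assumed) would take the place of the `io.StringIO` object. Note that missing values, such as the empty age in the third row, arrive as empty strings.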

A new experiment can be created from the left navigation bar. First, load the data into this screen by searching in "Saved Datasets" for the dataset you have just uploaded and dragging it across to the grey area.

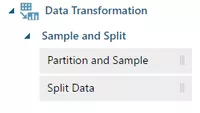

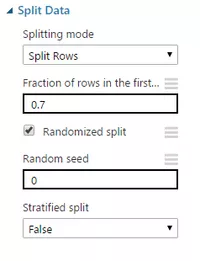

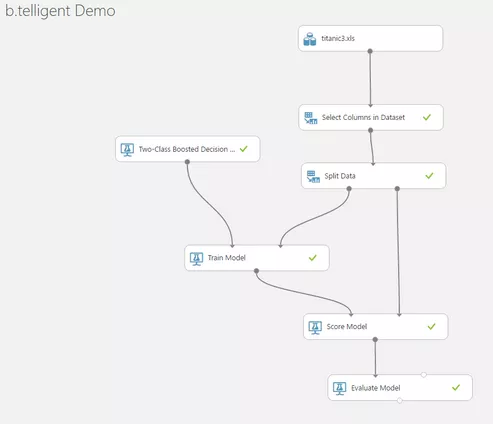

For the next steps, you will need data for training a model as well as for evaluating it. You should therefore first split the dataset into two parts. You will find the option for doing this under "Data Transformation".

Once you have dragged this option into the experiment, you can configure its properties. In this case, 70% of the data will be used for training the model and 30% for evaluating it later.

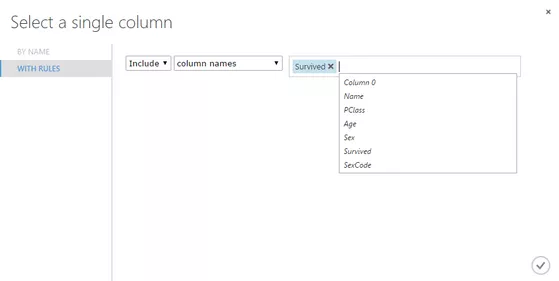
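To make the 70/30 split concrete, here is a minimal Python sketch of the same idea. It assumes a randomized split with a fixed seed. The function name `split_rows` and the seed value are illustrative and not taken from the Studio.

```python
import random


def split_rows(rows, train_fraction=0.7, seed=42):
    """Shuffle a copy of rows and split it into (train, test) lists."""
    shuffled = list(rows)
    # A dedicated Random instance keeps the split reproducible.
    random.Random(seed).shuffle(shuffled)
    cut = int(round(len(shuffled) * train_fraction))
    return shuffled[:cut], shuffled[cut:]


rows = list(range(100))  # stand-in for 100 passenger records
train, test = split_rows(rows)
print(len(train), len(test))
```

Fixing the seed means that repeated runs compare models on exactly the same training and evaluation rows.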

The next step is to look for a suitable model from the ones provided. We will use the "Two-Class Neural Network" and link it to the "Train Model" module. At this point, we also have to specify which single column in our data we'd like the model to predict. For the Titanic dataset upon which the model will be based, this is the "Survived" column.
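To give a feel for what a training step does with a label column, here is a deliberately simplified Python sketch. It trains a single logistic unit, the smallest possible "network", with gradient descent. This is a stand-in for illustration, not the algorithm behind the "Two-Class Neural Network" module, and the two features and all data values are made up.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic(features, labels, epochs=500, learning_rate=0.1):
    """Fit weights and a bias with plain stochastic gradient descent."""
    weights = [0.0] * len(features[0])
    bias = 0.0
    for _ in range(epochs):
        for x, y in zip(features, labels):
            p = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)
            error = p - y  # gradient of the log loss w.r.t. the pre-activation
            weights = [w - learning_rate * error * xi
                       for w, xi in zip(weights, x)]
            bias -= learning_rate * error
    return weights, bias


def predict_proba(model, x):
    """Return the model's estimated probability of the label being 1."""
    weights, bias = model
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


# Made-up toy data: [is_female, is_first_class] -> value of the label column
features = [[1, 1], [1, 0], [0, 1], [0, 0], [1, 1], [0, 0]]
labels = [1, 1, 0, 0, 1, 0]

model = train_logistic(features, labels)
print(round(predict_proba(model, [1, 0]), 2),
      round(predict_proba(model, [0, 1]), 2))
```

The label column plays the role of `labels` here, while all remaining columns become `features`.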

You can also clean up the uploaded dataset before splitting it, so that only the relevant data is retained. In this case, we will simply exclude columns that are not relevant to the analysis (names, for example).

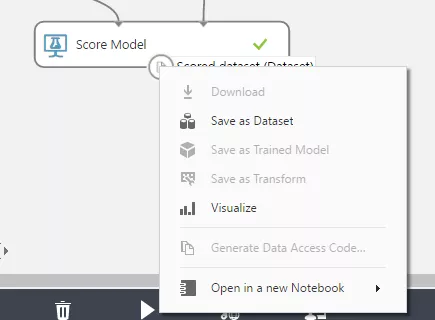

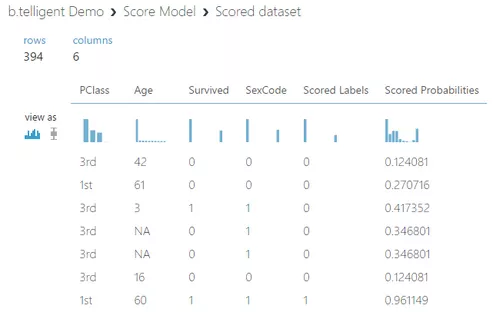
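The column exclusion described above can also be sketched in a few lines of Python. The helper name `drop_columns` is hypothetical and the rows are made-up examples.

```python
def drop_columns(rows, columns_to_drop):
    """Return new row dicts without the named columns; the input is unchanged."""
    excluded = set(columns_to_drop)
    return [{key: value for key, value in row.items() if key not in excluded}
            for row in rows]


# Made-up rows in the shape of the Titanic data.
rows = [
    {"Name": "Passenger A", "Age": 22, "Sex": "male", "Survived": 0},
    {"Name": "Passenger B", "Age": 38, "Sex": "female", "Survived": 1},
]

cleaned = drop_columns(rows, ["Name"])
print(cleaned[0])
```

Returning new dicts rather than modifying the rows in place keeps the original data available for comparison.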

For a trial run with the other part of the data, connect it, together with the trained model, to the "Score Model" module. After execution, which is started by clicking "Run", you can visualize the results by right-clicking the grey output port of the "Score Model" module.

At first glance, the rather low percentages in the results are surprising. Why does the trained model choose "not survived" even though the associated probability is less than 13 percent? The answer: because the probability of "survived" would have been even lower. Note that the "1 – probability" shortcut does not apply here: P(survived) ≠ 1 – P(not survived).

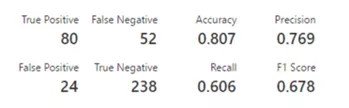

The performance of the model is evaluated statistically in the "Evaluate Model" module. The figures shown here are suitable for making comparisons with the results of other experiments. So you could keep everything the same but try a different model to see which gives better results.
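As a rough illustration of the kind of figures such an evaluation step reports, here is a Python sketch that computes accuracy, precision, recall and F1 score from actual and predicted labels. The labels below are made up, and the exact set of metrics the module displays is not reproduced here.

```python
def evaluate(actual, predicted):
    """Compute basic two-class metrics, treating label 1 as the positive class."""
    pairs = list(zip(actual, predicted))
    tp = sum(1 for a, p in pairs if a == 1 and p == 1)  # true positives
    tn = sum(1 for a, p in pairs if a == 0 and p == 0)  # true negatives
    fp = sum(1 for a, p in pairs if a == 0 and p == 1)  # false positives
    fn = sum(1 for a, p in pairs if a == 1 and p == 0)  # false negatives

    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Made-up labels: 1 = survived, 0 = not survived.
actual = [1, 0, 1, 1, 0, 0, 1, 0]
predicted = [1, 0, 0, 1, 0, 1, 1, 0]

metrics = evaluate(actual, predicted)
print(metrics)
```

Computing the same metrics for two models on the same evaluation data gives a like-for-like comparison.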

Because the Titanic dataset is comparatively small, the results are relatively weak; the concept of "garbage in, garbage out" applies. In other words, the results cannot be better than the data upon which they are based.

The entire experiment ultimately made use of seven modules and can be carried out easily in about 30 minutes without the need for any high-level mathematics. It is also suitable for use as a template for other fairly simple projects.

It's an ideal way of trying out, learning about and using complex machine learning algorithms for data analysis – without having to invest in expensive hardware and software licences.