Ever since ChatGPT was introduced in late 2022, we have all been thrilled about the possibilities of generative AI and large language models (LLMs). What intrigues people is the incredible ease of generating high-quality text and getting responses to questions, code fragments, etc. You simply write a prompt, which is a text input, feed it to ChatGPT’s API, and voilà, you have a response.

We are still very much in a generative AI hype cycle, where the benefits of a technology are typically overstated. For businesses, it is important to avoid the attendant pitfalls, and to understand when and how to best use ChatGPT or generative AI solutions. In this blog, we look beyond the hype, and show you an approach to evaluate and implement LLM-based Gen AI use cases.

How to evaluate and implement LLM use cases

Foundational LLMs are very large, deep neural networks, trained on extremely large text collections to learn useful language patterns. This broad approach, combined with the transformer neural network architecture, allows LLMs to be applied to many language-related business tasks. However, such versatility comes at a price. Compared to other, more task-specific AI solutions, there is a higher risk of getting poor-quality results or generated outputs with factual errors. Hence, in a typical business use case, we need to build controls and safeguards around the LLM.

Let’s suppose you have a great LLM use case idea, and want to assess if and how it could be used in your company. This can be done with a structured approach involving four distinct steps:

- Business value and risk assessment of your use case

- Drafting processes for using the LLM and mitigating the risks

- LLM solution approach

- LLM selection

1. Business value and risk assessment

The potential business value is your starting point. Assess the potential benefits of the use case, like cost savings, efficiency improvements, and revenue growth. LLMs are usually applied in text-intensive tasks. Hence, the text volume, the frequency of the task, and the human time invested are good places to begin the assessment. You must also carefully review the risks involved in using LLMs, e.g., GDPR violations, data security breaches, and the consequences of flawed responses. Also, question whether the LLM needs to take your internal data into account and what the attendant risks are. In general, assess how failure-tolerant your use case is. This has major implications for the business processes you need to build around the LLM itself. If the correctness and factuality requirements of the task are high, you need to build safeguards into the process that utilizes the LLM.
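As a rough illustration, the value side of this assessment can be put into a back-of-the-envelope calculation. The sketch below is only one possible way to do it: the class, the field names, and all figures are hypothetical placeholders, and a real assessment would also have to price in the risks discussed above.

```python
from dataclasses import dataclass


@dataclass
class UseCaseEstimate:
    """Hypothetical inputs for a rough value estimate; all figures are placeholders."""
    tasks_per_month: int            # frequency of the task
    minutes_per_task: float         # human time currently invested per task
    hourly_cost: float              # assumed fully loaded cost of one staff hour
    expected_time_saving: float     # assumed fraction of time the LLM saves (0..1)
    review_minutes_per_task: float  # human time spent checking each LLM output
    llm_cost_per_task: float        # assumed model or API cost per task


def monthly_net_saving(e: UseCaseEstimate) -> float:
    """Estimated monthly saving after subtracting review effort and LLM costs."""
    saved_minutes = e.minutes_per_task * e.expected_time_saving - e.review_minutes_per_task
    labour_saving = e.tasks_per_month * saved_minutes / 60 * e.hourly_cost
    return labour_saving - e.tasks_per_month * e.llm_cost_per_task


# Placeholder numbers for illustration only, not benchmarks.
estimate = UseCaseEstimate(
    tasks_per_month=2000,
    minutes_per_task=12,
    hourly_cost=60.0,
    expected_time_saving=0.5,
    review_minutes_per_task=2,
    llm_cost_per_task=0.05,
)
print(round(monthly_net_saving(estimate), 2))
```

Note that the review time is subtracted explicitly: the less failure-tolerant the use case, the larger this term becomes, and the smaller the net benefit.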

2. Draft the business process around the LLM

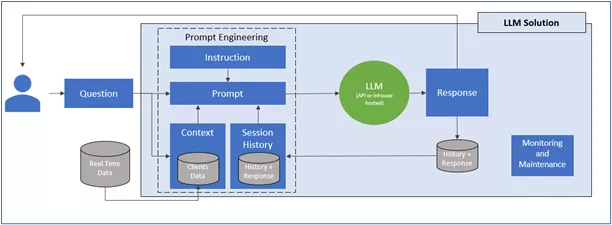

Next, draft the processes involving LLMs. Remember, the LLM is only a small part of the solution; the rest is in the pipeline, controls, and data flows that you will build around it. Think carefully about the situation in which the LLM is to be used, and the information sources you have available. Based on that, you can design a prompt engineering approach and an LLM pipeline that creates the correct, controlled input for the LLM. In addition, you need to design the post-processing steps for the response. And finally, do not forget to draft a process for quality controls and safeguards, and to design how the LLM will be improved over time.
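To make the idea of a pipeline around the LLM more concrete, here is a minimal sketch of the stages described above: controlled input, post-processing, and a safeguard with a human fallback. All function names are hypothetical, the safeguard is deliberately simplistic, and `call_llm` stands for whatever model or API you end up using; it is passed in as a plain function so that the sketch does not assume any particular provider.

```python
from typing import Callable


def build_prompt(question: str, sources: list[str]) -> str:
    """Create a controlled input: fixed instructions plus the available sources."""
    context = "\n".join(f"- {s}" for s in sources)
    return (
        "Answer using only the context below. "
        "If the answer is not in the context, reply exactly: UNKNOWN\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


def postprocess(raw: str) -> str:
    """Normalise the raw response before it is checked."""
    return raw.strip()


def passes_safeguards(answer: str, sources: list[str]) -> bool:
    """Toy quality control: reject empty or UNKNOWN answers, and answers
    that share no word at all with the provided sources."""
    if not answer or answer == "UNKNOWN":
        return False
    source_words = set(" ".join(sources).lower().split())
    return any(word in source_words for word in answer.lower().split())


def run_pipeline(question: str, sources: list[str],
                 call_llm: Callable[[str], str]) -> str:
    """Prompt -> LLM -> post-processing -> safeguard, with a human fallback."""
    answer = postprocess(call_llm(build_prompt(question, sources)))
    return answer if passes_safeguards(answer, sources) else "ESCALATE_TO_HUMAN"


# Stand-in for a real model call, used only to exercise the pipeline.
def fake_llm(prompt: str) -> str:
    return "  Returns are accepted within 30 days.  "


docs = ["Returns are accepted within 30 days of purchase."]
print(run_pipeline("What is the return policy?", docs, fake_llm))
```

In a real solution, the word-overlap check would be replaced by controls that match the failure tolerance of your use case, such as rule-based validation, a second model, or human review of a sample of the responses.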

LLM Solution for Business Use

3. LLM solution approach

First of all, decide how much you want to rely on available solutions, versus building your own. This applies to all components of the complete LLM solution, i.e., the LLM itself, parts of its pipeline, external vs. internal data use, quality safeguards, and model maintenance. Then, choose where to host your LLM: do you want to use your own infrastructure or an existing cloud provider and, if so, which one? The right mix should be driven by your AI strategy, the costs for different approaches, and the skill level and AI maturity of your organization.

4. LLM selection

Finally, it is time to dive in and select a suitable LLM for your task. Numerous LLMs are already available, and many providers, big and small, are rushing to create new ones. You have a choice of different LLMs from the main cloud platform providers like GCP, MS Azure, and AWS, and from open-source alternatives in popular LLM repository sites like Hugging Face (https://huggingface.co/).

There are a couple of key aspects to consider when choosing the LLM. The first is the type of LLM. We distinguish between foundational LLMs and more specific LLMs. Foundational LLMs are models designed and trained for all kinds of general text-based generative AI tasks. Specific LLMs are already fine-tuned for particular areas and topics. If your task fits one of these special topics, it’s preferable to assess the corresponding specific LLM first. The next key aspect to consider is the size of the LLM, typically measured by the number of parameters. Smaller LLMs are usually somewhat less accurate and less broadly applicable across domains. However, they require fewer resources and incur lower costs. In general, go for the smallest LLM that matches your problem and offers the desired accuracy. This will save you money and effort. Also, you can fine-tune many existing LLMs with your own data, and thus create a customized LLM that best fits your goals.
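The rule of thumb "smallest LLM that offers the desired accuracy" can be expressed in a few lines once you have measured the candidates on your own evaluation set. The following is a sketch under that assumption; the model names, parameter counts, and accuracy values are invented placeholders, not measurements of real models.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Candidate:
    name: str
    parameters_billion: float  # model size, in billions of parameters
    accuracy: float            # accuracy measured on your own evaluation set (0..1)


def pick_smallest_adequate(candidates: list[Candidate],
                           min_accuracy: float) -> Optional[Candidate]:
    """Return the smallest candidate that reaches the required accuracy,
    or None if no candidate is good enough."""
    adequate = [c for c in candidates if c.accuracy >= min_accuracy]
    return min(adequate, key=lambda c: c.parameters_billion, default=None)


# Invented placeholder values for illustration only.
candidates = [
    Candidate("small-model", 7, 0.81),
    Candidate("medium-model", 13, 0.90),
    Candidate("large-model", 70, 0.93),
]
choice = pick_smallest_adequate(candidates, min_accuracy=0.88)
print(choice.name if choice else "no candidate meets the target")
```

If no candidate reaches the target, that is a signal to consider a specific LLM for your topic or fine-tuning with your own data, rather than simply reaching for a larger model.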

Next steps?

Your overall AI strategy and maturity should shape your approach. If AI is a major transformational aspect of your business, you will probably need to go all-in and build in-house AI capabilities for LLMs. If you are on the gradual part of a learning curve, you could select 2–3 key LLM use cases and do a PoC to demonstrate the value of the approach, and thereby grow your involvement in AI step-by-step.

To find out more about b.telligent’s structured approach to help develop your business with LLMs and generative AI, feel free to contact me at jukka.hekanaho@btelligent.com, or reach out to me on LinkedIn.

Click here for my LinkedIn profile!