This is perhaps the most fundamental of all ABAP questions, and not only in the context of high-performance lookups: it arises as soon as you do anything in ABAP.

The most commonly used type of internal table is the standard table. The reasons for this are partly historical, as hashed and sorted tables only became available with Release 4.0. Standard tables can also be populated quickly, because each new data record can simply be added to the end of the table (see APPEND). For sorted and hashed tables, on the other hand, the correct position first has to be found (see INSERT) or the hash key has to be generated. Standard tables are therefore the preferred choice for writing.
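The difference between the three table kinds when writing can be sketched as follows. This is a minimal illustration, not a definitive implementation: the report name, the line type `ty_rec` and all variable names are hypothetical.

```abap
REPORT z_table_kinds_demo.

* Hypothetical line type, used only for illustration.
TYPES: BEGIN OF ty_rec,
         id   TYPE i,
         text TYPE c LENGTH 20,
       END OF ty_rec.

DATA: lt_standard TYPE STANDARD TABLE OF ty_rec WITH NON-UNIQUE KEY id,
      lt_sorted   TYPE SORTED TABLE OF ty_rec WITH UNIQUE KEY id,
      lt_hashed   TYPE HASHED TABLE OF ty_rec WITH UNIQUE KEY id,
      ls_rec      TYPE ty_rec.

ls_rec-id   = 42.
ls_rec-text = 'example'.

* Standard table: the row is simply added at the end.
APPEND ls_rec TO lt_standard.

* Sorted table: the position is derived from the key before inserting.
INSERT ls_rec INTO TABLE lt_sorted.

* Hashed table: the row is placed according to the hash of its key.
INSERT ls_rec INTO TABLE lt_hashed.
```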

For read access, however, standard tables are a performance killer: unlike the other table types, they are searched linearly. A search in a standard table with 50,000 data records therefore always starts at the very first data record. In the worst-case scenario, where the requested data record is at the very end, all 49,999 preceding data records are read first.

The example below illustrates just what impact this has in lookups:

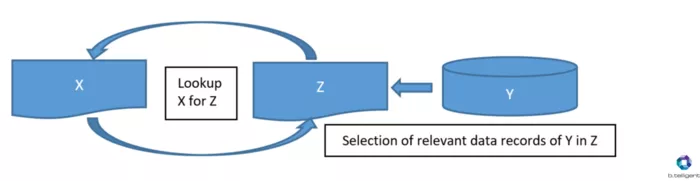

Imagine that we have an internal table, X, with 50,000 data records, and that each data record is to be supplemented with information from a database table, Y. In the best-case scenario, we have only loaded the relevant data records from database table Y to an internal table Z [link to article 4], and the latter contains exactly one corresponding data record for each of our X data records. Z thus also has 50,000 data records.

The type of table X is of secondary importance, as we need to LOOP through it and read every single data record anyway. If, however, Z is a standard table and the matching data record for the n-th record of X sits at position n in Z, we will have a total of 1,250,025,000 instances of read access (1 + 2 + ... + 50,000). With SAP BW on HANA, you are often working with data packages of 200,000 or more data records. In our example, this would take us to 20,000,100,000 instances of read access per data package if we used a standard table for Z.
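The two figures can be checked with the usual sum formula. This is a worked calculation under the assumption that the linear search for the i-th record of X reads exactly i rows of Z; the symbol n for the number of data records is introduced here for illustration.

```latex
\sum_{i=1}^{n} i = \frac{n(n+1)}{2}
\qquad
n = 50{,}000:\ \frac{50{,}000 \cdot 50{,}001}{2} = 1{,}250{,}025{,}000
\qquad
n = 200{,}000:\ \frac{200{,}000 \cdot 200{,}001}{2} = 20{,}000{,}100{,}000
```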

Of course, standard tables can also be sorted in ascending order and then searched using BINARY SEARCH. But why not use a sorted or hashed table in the first place? As a rule of thumb, there is little difference in speed between sorted and hashed tables at around 10,000 data records. For significantly larger tables, the hashed table is unbeatable, as the access time is more or less constant irrespective of size. Performance problems do not generally occur with fewer than 10,000 data records.
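A minimal sketch of the two read variants, assuming a hypothetical lookup table with a unique `id` field (the report name, the type `ty_z` and all variable names are illustrative, not taken from the original example):

```abap
REPORT z_lookup_demo.

* Hypothetical line type for the lookup table Z.
TYPES: BEGIN OF ty_z,
         id     TYPE i,
         amount TYPE i,
       END OF ty_z.

DATA: lt_z_std  TYPE STANDARD TABLE OF ty_z WITH NON-UNIQUE KEY id,
      lt_z_hash TYPE HASHED TABLE OF ty_z WITH UNIQUE KEY id,
      ls_z      TYPE ty_z.

* Fill both tables with the same illustrative rows.
DO 5 TIMES.
  ls_z-id     = sy-index.
  ls_z-amount = sy-index * 10.
  APPEND ls_z TO lt_z_std.
  INSERT ls_z INTO TABLE lt_z_hash.
ENDDO.

* Standard table: sort once by the search field, then search in halves.
* BINARY SEARCH is only reliable if the table is sorted by that field.
SORT lt_z_std BY id.
READ TABLE lt_z_std INTO ls_z WITH KEY id = 3 BINARY SEARCH.
IF sy-subrc = 0.
  WRITE: / 'Binary search found amount', ls_z-amount.
ENDIF.

* Hashed table: access via the complete table key, no sorting required.
READ TABLE lt_z_hash INTO ls_z WITH TABLE KEY id = 3.
IF sy-subrc = 0.
  WRITE: / 'Hashed lookup found amount', ls_z-amount.
ENDIF.
```

With the standard table, the sort order has to be maintained by the developer, for example after every further APPEND. The hashed table takes care of its own key administration.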

The 'disadvantage' of hashed tables is, however, that they require a unique table key and can therefore only contain one data record per key. If it is not possible to generate a unique table key, for example because the selected data has been aggregated, you should use sorted tables.
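A sorted table with a non-unique key might look like this. This is a sketch under the assumption that several data records can share the same `id`; the report name, the type and the data are hypothetical.

```abap
REPORT z_sorted_nonunique_demo.

* Hypothetical line type; several rows may share the same id.
TYPES: BEGIN OF ty_z,
         id     TYPE i,
         amount TYPE i,
       END OF ty_z.

DATA: lt_z_sorted TYPE SORTED TABLE OF ty_z WITH NON-UNIQUE KEY id,
      ls_z        TYPE ty_z.

ls_z-id = 1.
ls_z-amount = 10.
INSERT ls_z INTO TABLE lt_z_sorted.

ls_z-id = 1.
ls_z-amount = 20.
INSERT ls_z INTO TABLE lt_z_sorted.

ls_z-id = 2.
ls_z-amount = 30.
INSERT ls_z INTO TABLE lt_z_sorted.

* Process all rows for one key value. Because the WHERE condition
* compares the leading key field for equality, the system can use
* the sort order instead of scanning the whole table.
LOOP AT lt_z_sorted INTO ls_z WHERE id = 1.
  WRITE: / ls_z-id, ls_z-amount.
ENDLOOP.
```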

In short:

- If you need an internal table for writing, use a standard table.

- If you need to search a large internal table, use a hashed table.

- If you need to search a large internal table with non-unique data records, use a sorted table.