This end-to-end and step-by-step quick demo shows you how to connect an existing on-premises data source to a modern cloud data warehouse solution such as Snowflake:

- Easily

- Fast

- Securely, and

- Without having to write a single line of code.

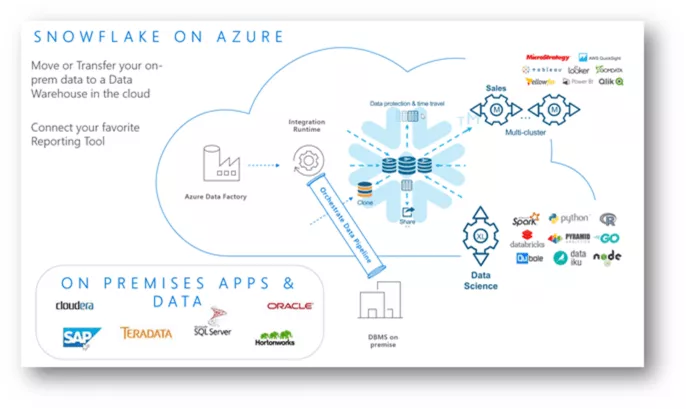

Furthermore, it demonstrates that Snowflake on Azure is a great combination of the Azure data factory, Azure functions and Microsoft Power BI, besides other well-known connected services in the Azure cloud ecosystem, including the single sign-on feature with the Azure active directory. It also shows that it is possible to build a hyper-scale enterprise data analytics solution with a dual vendor strategy.

The Azure data factory can perform both, ELT and ETL tasks. Currently, there is no native Snowflake open source connector available in ADF V2. However, with the Snowflake.net Driver several options are possible for workloads with Azure functions and custom activities, together with Azure data factory pipelines.

Scenarios depend on many factors and circumstances. In our case, the sources are mostly relational database management systems. For several reasons, we do not want to extract data from our source system to a csv file, and then transfer the csv to cloud blob storage and load these into Snowflake with native connectors. However, this would still be a highly valid and common scenario.

We want to go for a scenario where we have directly linked both databases, using the Azure data factory to load the data directly from the source tables into Snowflake tables. After loading the data, we want to use Microsoft Power BI to analyze the data with a live connection to the analytics model. This direct connection to the data warehouse has the advantage that we do not need to reload the data out of Snowflake.

Tools and services used for the demo:

- SQL Server 2017 database, on-premises

- Azure runtime integration, on-premises

- Azure data factory V2 pipeline (integration runtime configured)

- Snowflake data warehouse

- Power BI

Background: Architecture on Azure – how we built it:

https://www.snowflake.com/blog/how-we-built-snowflake-on-azure/

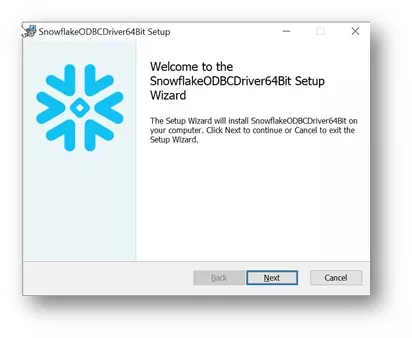

Install the Snowflake ODBC driver

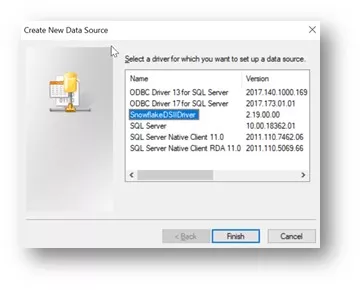

Open ODBC data sources (64-bit) and create a new data source

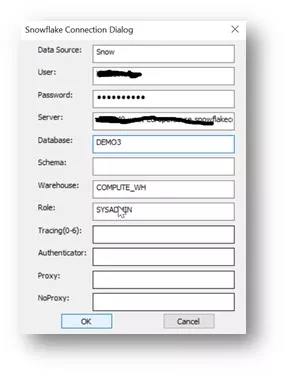

Enter the details into the Snowflake connection dialog

Install and configure Azure integration runtime

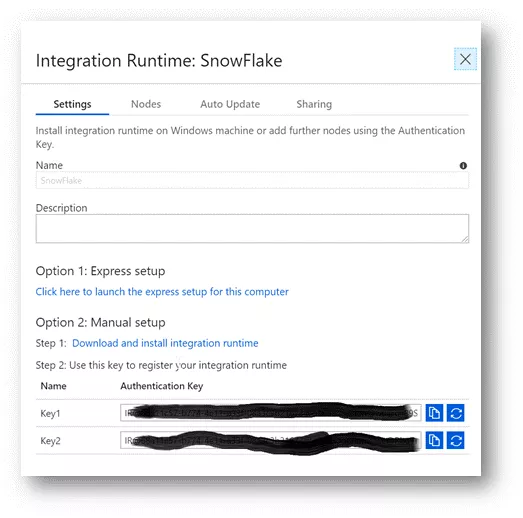

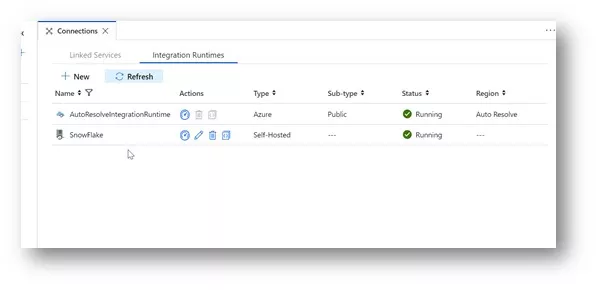

- Create and configure integration runtime in an Azure data factory V2

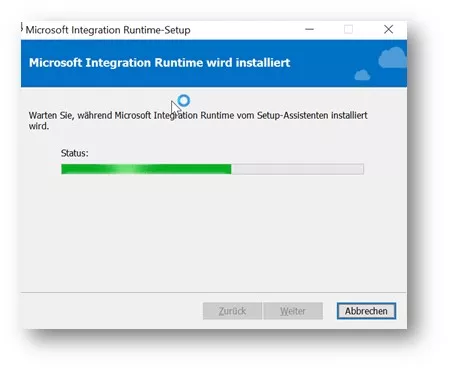

- Install Azure integration runtime, on-premises

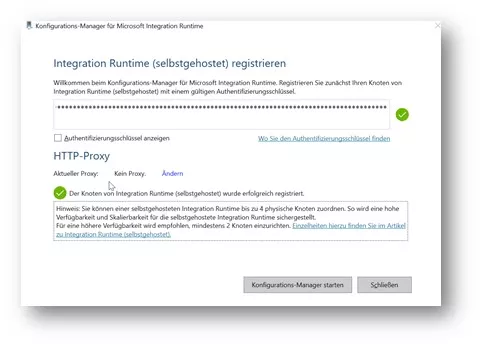

- Register the runtime and enter the authentication key from the ADF V2 runtime created earlier

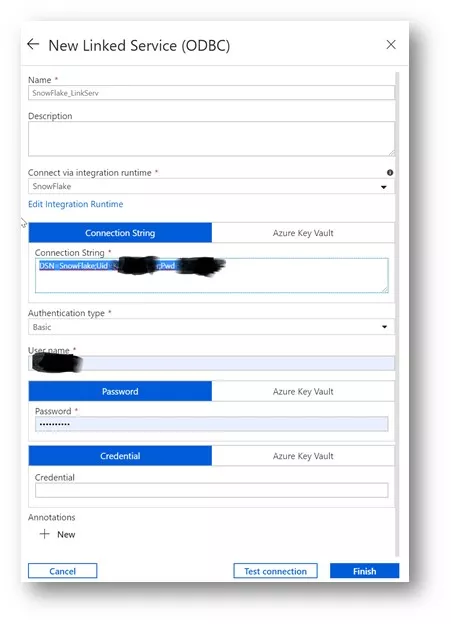

Create a new ODBC-linked service in the Azure data factory

- Configure the ODBC-linked service by entering the data source name, user name, and password created earlier

- Test the connection from the Azure data factory

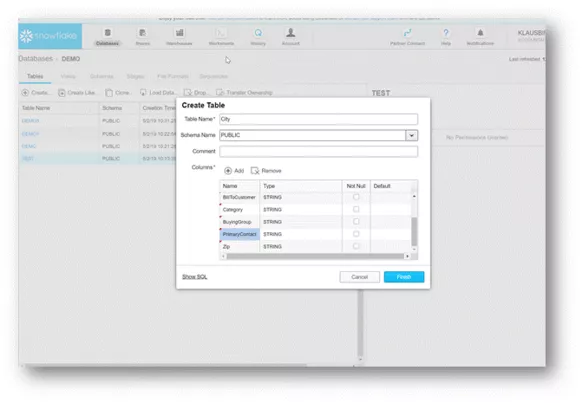

Create a table in Snowflake

Data types are irrelevant in this quick demo.

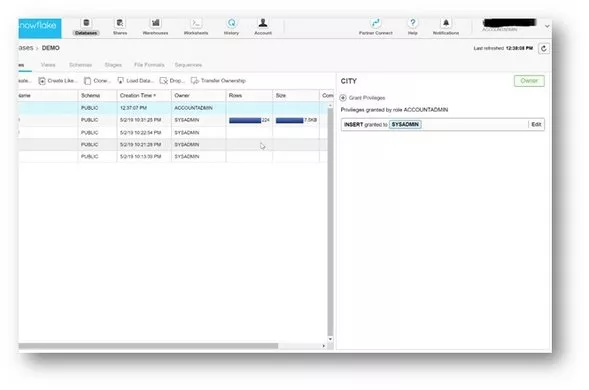

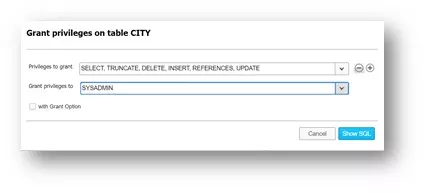

Grant proper access rights, in case they are needed. For this demo, we do it quickly, which is normally not recommended:

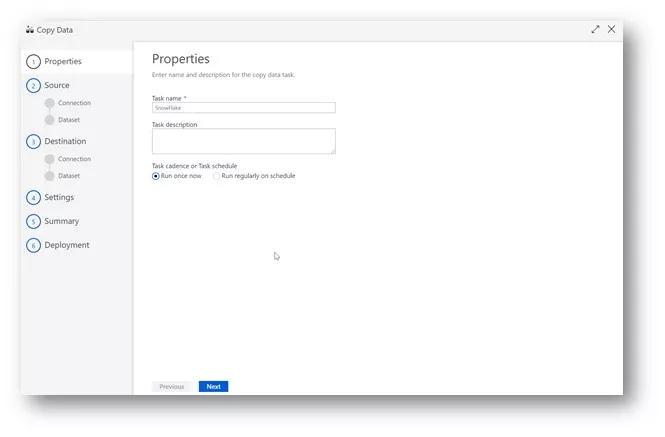

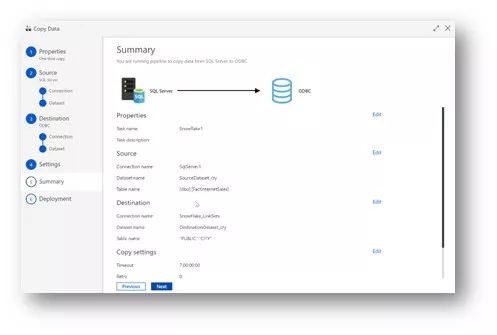

Copy the data wizard from the Azure data factory V2

- Source SQL server 2017, on-premises

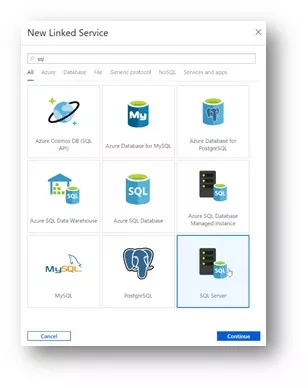

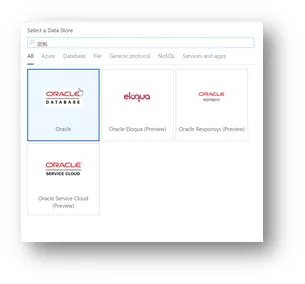

Create a new linked service to an on-premises SQL server. Any linked service that supports Azure integration runtime is available here, including Oracle, Sap, MySQL, Teradata, etc. Or you can connect easily to Snowflake with a direct load through the Azure data factory.

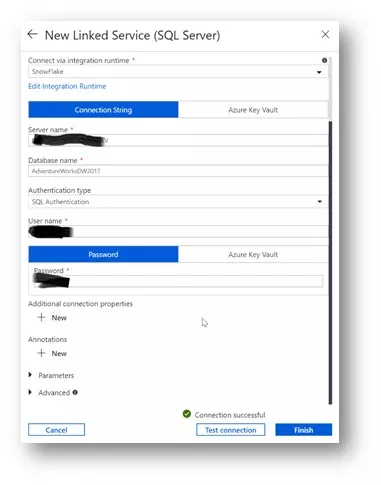

Enter the server name, reached through the previously configured Azure integration runtime, and get authenticated. Test the connection.

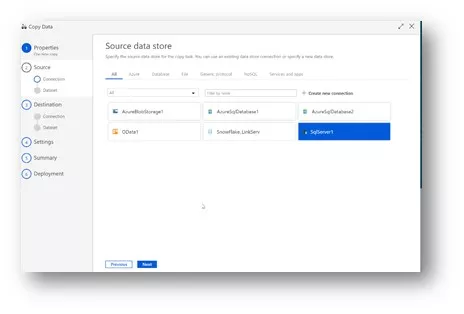

Now select the recently configured on-premises data source.

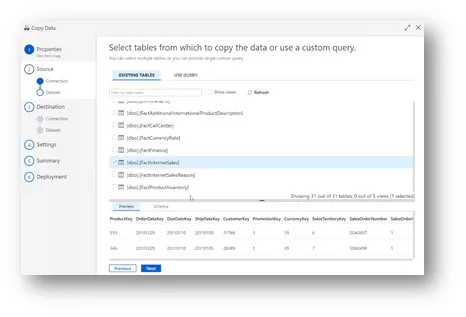

Select your data tables from your on-premises data source

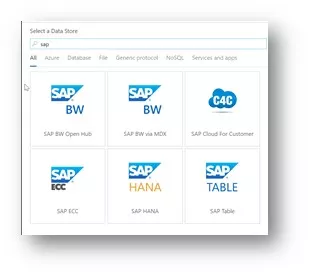

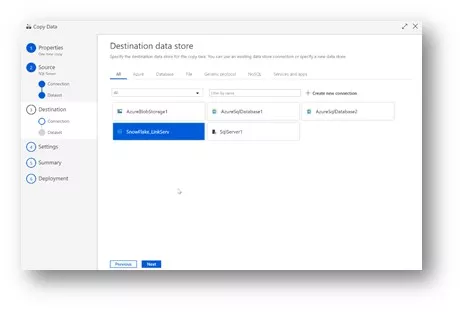

Select the Snowflake linked service created earlier as the destination

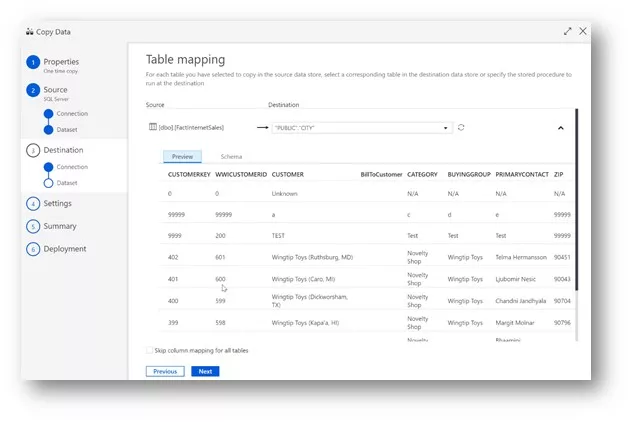

Map the selected tables

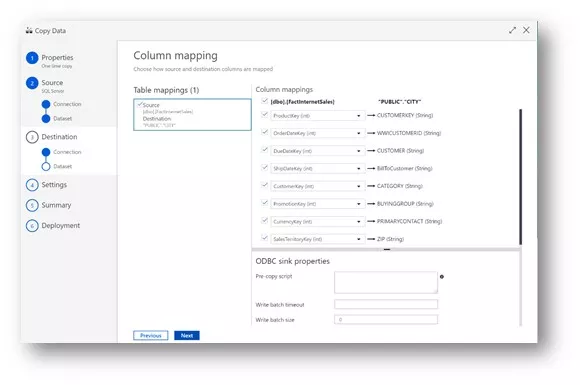

Map the columns between the tables

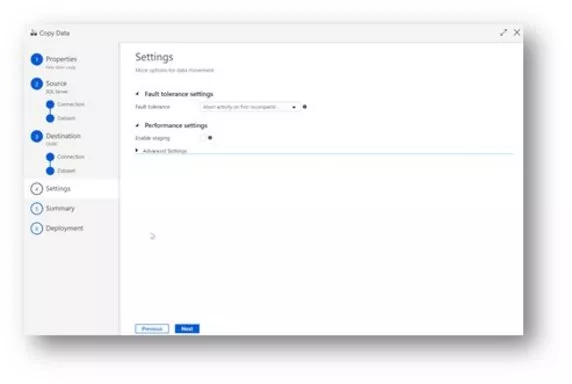

Settings

Summary

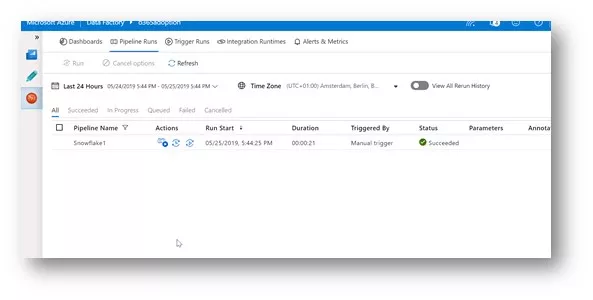

Let the pipeline run

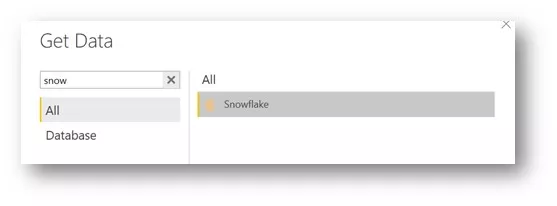

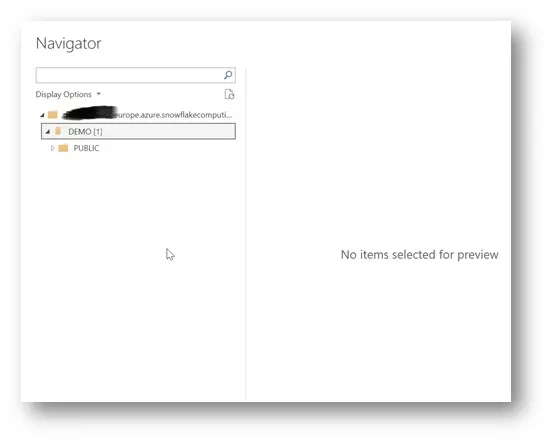

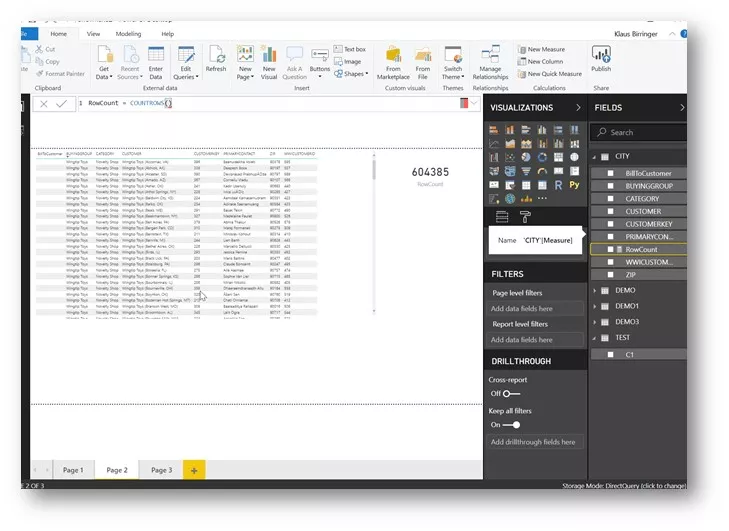

Connect with the Power BI direct query

Conclusion

- Connect quickly and easily to almost any on-premises data source – without having to write any line of code, whatsoever.

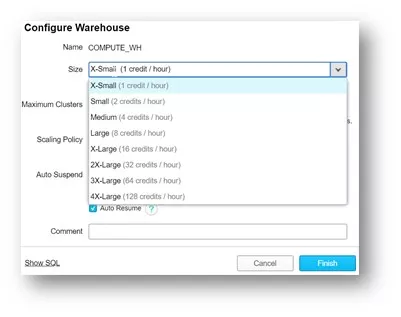

- Although it may not appear as such, the warehouse size does have a measurable impact on ETL/ ELT workloads on reporting workloads. This means the concept of using different warehouse sizes for different workloads or user groups makes absolute sense.

- Currently, there is no native Snowflake connector available in ADF V2, but there is a .net driver that can be used to create your own.

- The demo showed that Snowflake on Azure is a great combination of the Azure data factory, Azure functions and Microsoft Power BI, besides other well-known connected services in the Azure cloud ecosystem, including the single sign-on feature with the Azure active directory. It also shows that it is possible to build a hyper-scale enterprise data analytics solution with a dual vendor strategy.

- Larger data volumes could take longer to transfer, depending on various factors.